· retrotech · 6 min read

Ask Jeeves vs. Google: A Nostalgic Showdown of Search Philosophies

A walk down memory lane, where a genteel butler named Jeeves invited you to ask questions in full sentences and a stark white box called Google taught the web to be ranked. This piece compares the human-facing, conversational philosophy of Ask Jeeves with Google’s algorithmic meritocracy, traces how they reflected cultural currents of their era, and teases out what the duel tells us about the future of search.

I remember typing: “Why does my cat knead the blanket?”

The screen answered with a polite illustration of a butler clearing his throat and a list of links. The experience felt like talking to someone: an imagined, impeccably dressed household aide who would translate messy human curiosity into tidy results. That was Ask Jeeves.

A few years later I typed: “cat kneading blanket” into a single white box and instantly received results ranked by a whisper of the web’s collective judgment. No butler. No pleasantries. Just a machine that seemed to know which pages mattered. That was Google.

This is not merely nostalgia for branding. It’s a conflict between two search philosophies: one built around the human question, the other around algorithmic judgment. Each tells us something about the internet, business, and culture at the moment it rose.

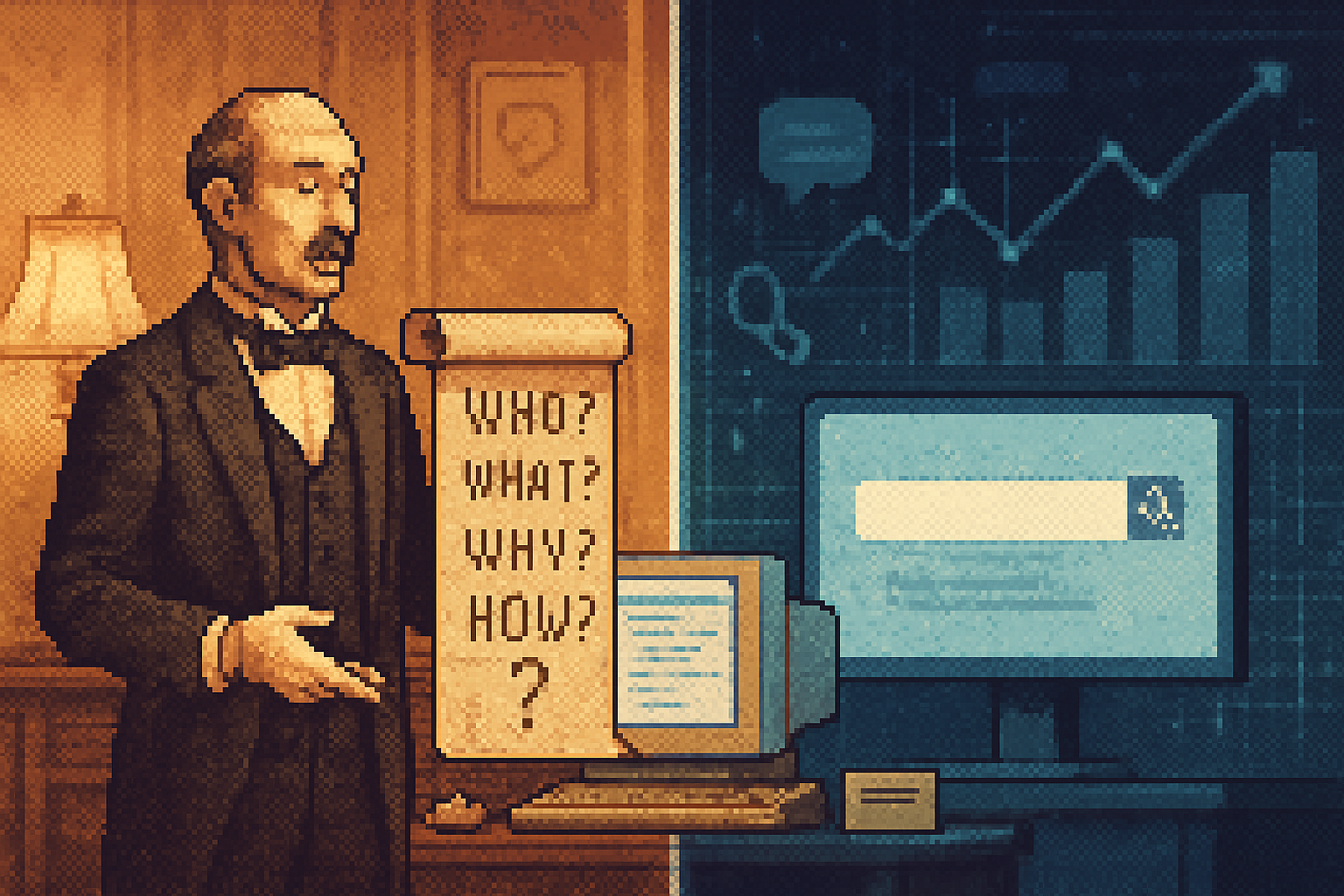

The two philosophies, in plain English

Ask Jeeves - design as translation. Ask’s proposition was simple and ingenuous: users should be able to ask search engines real questions in natural language, and the system should understand them. The interface anthropomorphized the machine as Jeeves, the butler, inviting conversational queries and promising a helpful hand that would interpret intent and serve answers politely. See Ask.com’s history for details.

Google - trust the links, not the manners. Google arrived with an algorithmic premise: the structure of the web (its hyperlinks) encoded collective judgment. A page linked by many important pages is probably important itself. Rank pages by that signal and you get better results. The idea is formalized in the original PageRank paper and in the early vision of Google as an engine of objective relevance.
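To make the "important pages link to important pages" idea concrete, here is a minimal sketch of link-based ranking by repeated score passing. It is an illustration only, not Google's actual implementation: the four-page toy web, the function name `pagerank`, the iteration count, and the damping value of 0.85 are all assumptions chosen for the example.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Toy link-based ranking (illustrative sketch, not a production algorithm).

    links: dict mapping each page to the list of pages it links to.
    Returns a dict mapping each page to a score; scores sum to roughly 1.
    """
    # Collect every page that appears as a source or a target.
    pages = set(links)
    for targets in links.values():
        pages.update(targets)
    pages = sorted(pages)
    n = len(pages)

    # Start with every page equally important.
    rank = {page: 1.0 / n for page in pages}

    for _ in range(iterations):
        # Every page gets a small baseline score regardless of links.
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page in pages:
            targets = links.get(page, [])
            if targets:
                # A page splits its current score evenly among its outlinks.
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new_rank[target] += share
            else:
                # A page with no outlinks spreads its score over all pages.
                share = damping * rank[page] / n
                for target in pages:
                    new_rank[target] += share
        rank = new_rank
    return rank


# Hypothetical four-page web: nobody links to "orphan".
links = {
    "home": ["about", "blog"],
    "about": ["home"],
    "blog": ["home", "about"],
    "orphan": ["home"],
}
ranks = pagerank(links)
for page, score in sorted(ranks.items(), key=lambda item: -item[1]):
    print(f"{page:7s} {score:.3f}")
```

In this toy web, "home" ends up with the highest score because every other page links to it, while "orphan" ends up with the lowest because nothing links to it. No one had to describe what any page is about; the ordering falls out of the link structure alone, which is the whole point of the philosophy.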

One philosophy prioritized human-facing interaction; the other prioritized extracting a mechanical measure of relevance from the web’s topology.

Why Ask Jeeves felt right for the 1990s

The late 1990s were a time of exuberant optimism about interfaces. The web was a newcomer; users were novices. A design that read like a friendly conversation soothed anxieties. Asking full questions (“Where can I find a cheap digital camera?”) was natural, even elegant: humans talk in sentences.

Ask Jeeves rode two cultural currents:

- The personalization fetish - the belief that the computer should be a companion. Anthropomorphic agents (Clippy, remember?) promised a friendlier user experience.

- The usability problem - early web search was noisy. Helping users phrase queries seemed like a high-leverage fix.

The butler metaphor was brilliant marketing. It placed the machine inside a social script: you asked a servant, who then did the legwork. The interface minimized cognitive load and made the web feel domesticated.

Why Google’s stark minimalism won

Google’s minimalist search box looked almost rude compared to the warm tones of Ask. That rudeness hid something far more powerful: an assumption that relevance could be discovered, not simulated.

Google’s strengths:

- Algorithmic scalability. Once you trust link signals and a couple of other features, relevance becomes a mathematical optimization problem, one that scales to billions of pages.

- Simplicity breeds speed. The single-line interface reduces friction. Type less; get more.

- Neutrality-by-design illusion. Google presented itself as objective: ranking based on signals, not personalities.

The minimal UI also masked a vastly more complicated backend, which could be improved iteratively and rewarded with user retention.

Two metaphors: butler vs. referee

Ask Jeeves = butler. Serves. Listens. Tries to interpret intent with courtesy.

Google = referee or scholar. Observes the game. Tallies votes (links). Assigns authority.

But metaphors can mislead. Jeeves suggested empathy and comprehension. Google suggested impartiality. In practice both systems were wrong in different ways: Jeeves overpromised natural understanding; Google underplayed editorial choices and biases embedded in its algorithm.

The broader cultural mirror

Search design didn’t appear in a vacuum. It reflected larger societal moods:

- Late 1990s humanism - There was a strong desire to humanize technology. The web would be friendly, domestic, and conversational. Interfaces tried to bend machines toward human social scripts.

- Early-2000s meritocracy of data - As the web grew, faith in algorithms grew with it. If you could measure it, you could optimize it. The success of PageRank fed cultural narratives that data-driven processes produce fair, objective outcomes.

These trends have political resonance. The butler model promises mediation and human judgment; the algorithmic model promises impartial metrics. Both are seductive because they claim to reduce friction in understanding the world.

A few historical episodes that mattered

- The dot-com era and the usability panic - Early search was noisy; Ask’s pitch to handle messy questions was pragmatic.

- Google’s emergence (late 1990s) - Brin and Page refined link analysis into a scalable product that outperformed many earlier engines like AltaVista and Lycos.

- The commodification of attention - As ad models matured, search engines’ incentives changed. Ranking became an instrument not just for relevance but for commercial allocation, altering how algorithmic neutrality was perceived.

What each philosophy got right - and wrong

Ask Jeeves got right:

- Human behavior. People ask questions in sentences. Natural language is user-friendly.

- The need to help users express intent.

Ask Jeeves got wrong:

- Overreliance on surface-level parsing and canned heuristics.

- Thinking anthropomorphic UI alone solves semantic understanding.

Google got right:

- Harnessing collective signals (links) to approximate relevance at scale.

- Building an infrastructure that rewarded iterative improvement.

Google got wrong:

- Presenting ranking as neutral while it encodes editorial choices and commercial pressures.

- Creating opaque systems where users had little control or insight into why results appeared.

Where the duel points us now

A funny thing happened: the human-centered vision and the algorithmic vision are converging, but not without friction.

- Conversation resurges. Voice assistants (Siri, Alexa) and chat-based interfaces (chatbots and large language models) owe their popularity to natural language. The Ask Jeeves instinct, to let people ask in plain English, was prescient.

- Under the hood, algorithms still rule. Modern conversational systems are statistical beasts trained on massive corpora; they blend semantic understanding with ranking, filtering, and commercial objectives.

- Trust and explainability are unresolved. Users like asking questions, but they also increasingly distrust opaque rankings and unseen manipulations. The desire for transparency is growing.

Practical implications for designers, policymakers, and users

Designers:

- Build for intent, not just keywords. Let users speak, but design clear affordances so they understand how the system uses their words.

- Combine the conversational front-end with accountable ranking systems. Expose signals where feasible.

Policymakers:

- Recognize that algorithmic ranking is editorial power. Consider rules for transparency, auditability, and redress.

Users:

- Know the trade-offs. Convenience often trades off with control and explainability. If a result appears, it reflects design and commercial decisions, not divine truth.

The future: hybrid humility

The neatest prediction is modest: neither Jeeves’s worldview nor Google’s will vanish. We will keep the human-facing conversational interfaces because they match how humans think. We will also keep algorithmic ranking because it scales and, usually, it works.

The real challenge is humility.

- Humility from designers - admit that recommendations are not objective facts; provide context and provenance.

- Humility from users - treat search results as graded, not gospel.

We will see more systems that answer in sentences but append a small, perhaps boring, bibliography: links, votes, provenance. A machine will talk like a butler but cite like a scholar.

Final thought

Ask Jeeves taught us to speak like humans. Google taught us to trust the crowd’s structure. Both lessons are correct and incomplete.

If the history of search is any guide, the future will be less about choosing between a butler and a referee and more about asking how to build systems that are both humane and accountable: conversational on the surface, explainable below, and answerable when they are wrong.

For a brisk recap of the technologies and history mentioned above, see Ask.com history [https://en.wikipedia.org/wiki/Ask.com], Google history and PageRank background [https://en.wikipedia.org/wiki/Google] and the original PageRank paper [http://infolab.stanford.edu/~backrub/google.html].